CData Software Acquires Data Virtuality to Modernize Data Virtualization for the Enterprise

Data Virtuality brings enterprise data virtualization capabilities to CData, delivering highly-performant access to live data at any scale.

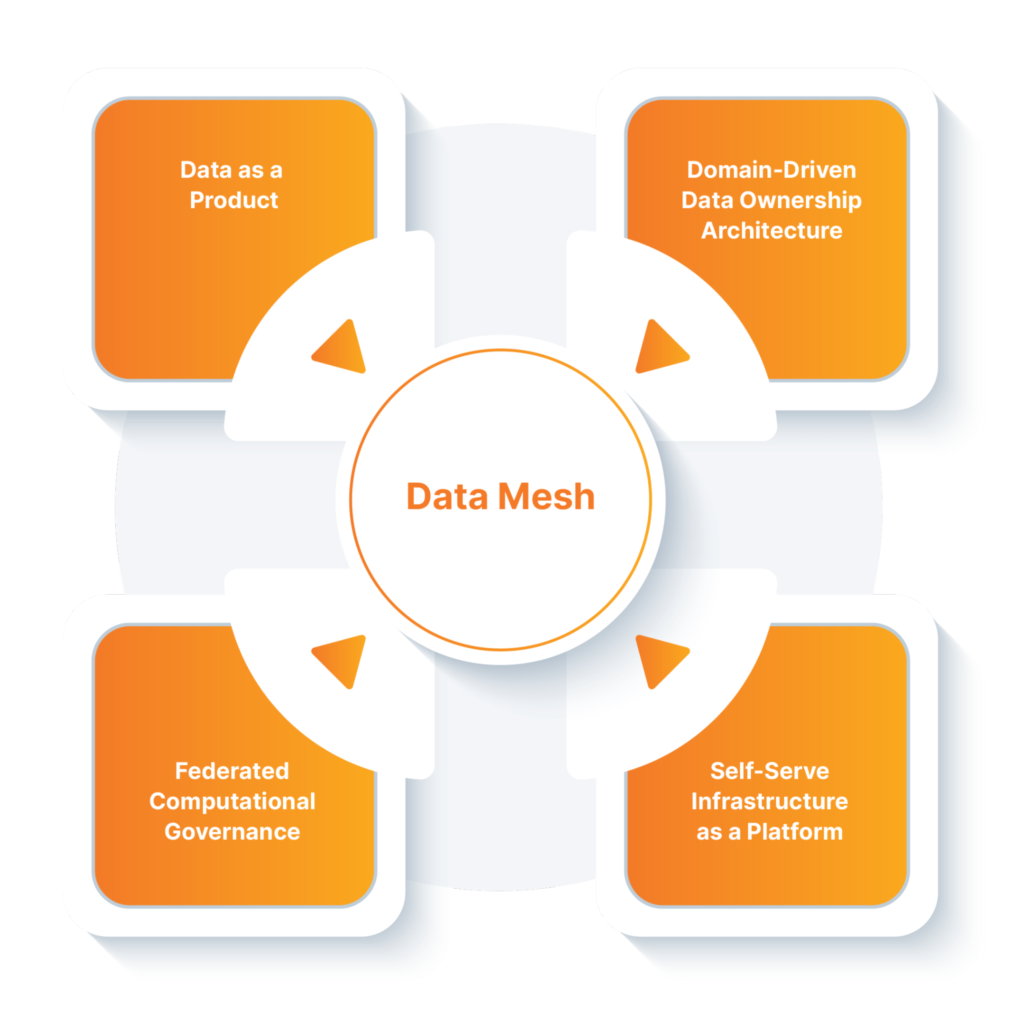

Data Mesh is a socio-technical data management paradigm proposed by Zhamak Dehghani. It challenges traditional monolithic data architectures, which were largely technology-focused, with a business-centric, decentralized approach that ultimately aims to make data usable across the company. Data Mesh confronts the current enterprise data architecture to:

Zhamak Dehghani, who coined the term Data Mesh, defines the concept as follows: “A data mesh considers domains as a first-class concern, applies platform thinking to create self-serve data infrastructure, treats data as a product, and introduces a federated and computational model of data governance.”

The ideas behind Data Mesh aren’t new. Like related concepts such as Data Fabric, the Logical Data Warehouse, and the Data Lakehouse, Data Mesh ultimately aims to make data accessible and usable for all users, especially on the business side.

We will go into more depth on data as a product later.

Communities are a major driver of Data Mesh. They have helped create new data and partner ecosystems and have spread the word about this new concept. Enabling data sharing, insight sharing, co-development of models, and data-driven products and services laid the foundation for Data Mesh.

A common source of confusion is that Data Mesh shifts the focus away from technology. This is often misread as meaning that technology can be neglected. But technology cannot be ignored when enabling Data Mesh: only with the right data integration, management, and governance tools can a user-centric approach be delivered.

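To enable this paradigm shift away from traditional monolithic approaches, Data Mesh is based on four key pillars: domain-oriented decentralized data ownership and architecture, data as a product, self-service data infrastructure as a platform, and federated computational governance.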

“Data Mesh is the approach, Data Fabric is the platform.” Contrary to public voices that suggest the two contradict each other, data fabric and data mesh actually point in the same direction.

Companies often start by deploying a data fabric and gain initial value from it. A data fabric prioritizes the flow and pathways of data: it defines data integration, schema governance, and the types of pipeline capabilities (ETL/ELT, data virtualization, microservices, APIs, streaming, etc.) in the context of SLAs. In the next step, Data Mesh adds the focus on business outcomes and time to value.

“A data fabric is a technology-enabled implementation capable of many outputs, only one of which is data products. A data mesh is a solution architecture for the specific goal of building business-focused data products.”

The Data Mesh concept differs from the others in that it is technology-agnostic and focuses more on the human, social side of data management challenges. The data mesh framework argues that earlier concepts concentrated too much on technology and thereby failed to fully understand and address the needs of the business, which ultimately uses the data for insights. With its decentralized approach, Data Mesh tries to bridge the gap between business needs and technology. On the technical side, it recognizes and respects the distributed nature and topology of data and the different use cases it can enable. On the human side, it considers the individual personas of data consumers, their diverse access patterns, and their domain-specific knowledge.

| Pillar | Pro | Con |
|---|---|---|
| Domain-oriented decentralized data ownership and architecture | Removes the bottleneck of centralized infrastructure: no separate entity is needed to take care of all data management tasks (e.g., for data scientists looking for data in a data lake environment). | A decentralized approach can lead to more data silos and lower data quality, so data needs to be treated differently. |
| Data as a product | This is mainly a philosophical shift, so no technology has to be bought for it. | Changing people’s approach to data takes time. |
| Self-service data infrastructure as a platform | The business becomes less dependent on IT and is therefore more agile. | Managing data infrastructure is complex and requires specialized skills that won’t exist in every domain, yet every domain still needs to be enabled. |
| Federated computational governance | Ensures that rules and regulations are adhered to and the company stays compliant. | It is difficult to ensure a healthy, interoperable ecosystem in this decentralized setup. |
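To make the first three pillars a little more concrete, here is a minimal Python sketch, assuming a hypothetical in-memory catalog: each domain team publishes and owns its data products, and consumers discover them through a shared self-serve registry instead of going through a central data team. The names `DataProduct`, `ProductRegistry`, `publish`, and `discover` are illustrative assumptions, not part of any Data Virtuality API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataProduct:
    """Hypothetical descriptor of a domain-owned data product."""
    name: str
    domain: str               # owning business domain (decentralized ownership)
    owner: str                # accountable product owner (data as a product)
    columns: tuple[str, ...]  # published interface consumers can rely on


@dataclass
class ProductRegistry:
    """Hypothetical self-serve catalog shared by all domains."""
    products: dict[str, DataProduct] = field(default_factory=dict)

    def publish(self, product: DataProduct, publisher_domain: str) -> None:
        # Only the owning domain may publish or update its own products.
        if product.domain != publisher_domain:
            raise PermissionError(
                f"{publisher_domain!r} cannot publish a product owned by {product.domain!r}"
            )
        self.products[product.name] = product

    def discover(self, domain: str | None = None) -> list[DataProduct]:
        # Consumers browse the catalog themselves instead of asking a central data team.
        matches = (p for p in self.products.values() if domain is None or p.domain == domain)
        return sorted(matches, key=lambda p: p.name)


# Example: the sales domain publishes a product that any consumer can discover.
registry = ProductRegistry()
registry.publish(
    DataProduct("orders_daily", "sales", "sales-data-team", ("order_id", "amount", "day")),
    publisher_domain="sales",
)
print([p.name for p in registry.discover("sales")])  # ['orders_daily']
```

The ownership check in `publish` is one simple way to express "domains as a first-class concern"; a real platform would tie it to authentication and add schema and quality contracts.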

This framework certainly has a lot of potential. However, it is still at an early, emerging stage with very few real-life implementations. Many of its weaknesses and challenges are still unknown or unclear. It will be interesting to see how it evolves over the next few years!

In principle, all data-related use cases can benefit. On closer inspection, however, use cases that combine analytical and transactional systems, in which digital systems are embedded with intelligence, are best suited to Data Mesh. Below is an example list of use cases:

More automated processes provide a more personalized and contextualized customer experience. The results are reduced average handling time, increased first-contact resolution, and improved customer satisfaction.

Marketing teams can run targeted campaigns that reach the right customer, at the right time, via the right channels.

Customer data can be protected by complying with ever-emerging regional data privacy laws such as GDPR. Security rules can easily be applied at the global level by integrating external data governance, policy, and security tools (such as Collibra) before the data is made available to data consumers in the business domains.
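As a rough illustration of applying a security rule globally before data reaches domain consumers, the sketch below pseudonymizes fields classified as personal data using a salted SHA-256 hash. The field list, the salt handling, and the functions `pseudonymize` and `apply_global_policy` are hypothetical; in practice this step would be delegated to a governance or policy tool, whose API is not modeled here.

```python
import hashlib
from typing import Any

# Hypothetical global rule, defined once centrally: fields classified as personal data.
GLOBAL_PII_FIELDS = frozenset({"email", "phone", "full_name"})


def pseudonymize(value: Any, salt: str) -> str:
    # Stable token: equal inputs map to equal tokens (so joins still work),
    # but the raw value is not exposed. Keep the salt secret.
    digest = hashlib.sha256((salt + str(value)).encode("utf-8")).hexdigest()
    return digest[:16]


def apply_global_policy(records: list[dict[str, Any]], salt: str) -> list[dict[str, Any]]:
    """Return copies of domain records with PII fields pseudonymized; inputs are untouched."""
    return [
        {key: pseudonymize(val, salt) if key in GLOBAL_PII_FIELDS else val
         for key, val in record.items()}
        for record in records
    ]


rows = [{"customer_id": 42, "email": "jane@example.com", "country": "DE"}]
masked = apply_global_policy(rows, salt="change-me")
print(masked[0]["customer_id"], masked[0]["country"])  # 42 DE
```

Because the rule lives in one place while every domain calls it, the policy stays consistent without a central team having to handle each domain’s data by hand.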

Insights into device usage patterns help to continually improve product adoption and profitability.

Machine learning (ML) and artificial intelligence (AI) models can be easily fed with data from different sources to help them learn, without running the data through a central place.

Data latency can be reduced by providing instant query access to data from nearby geographies without access limitations.

Domain-oriented decentralization makes it possible to analyze data and run fraudulent-behaviour models locally, and thereby detect and prevent fraud in real time.

The combination of decentralized data ownership and federated governance allows data sovereignty and data residency on a regional level while complying with data governance rules on the global level.
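The split between regional ownership and global rules can be sketched as follows, assuming a hypothetical setup in which each regional domain stores its own records and a centrally defined residency rule decides which consumer regions may read them. The region codes, the `RESIDENCY_RULES` mapping, and the `RegionalDomain` class are illustrative assumptions, not a description of any specific product.

```python
from dataclasses import dataclass, field

# Hypothetical global rule: which consumer regions may read data resident in each region.
RESIDENCY_RULES: dict[str, frozenset[str]] = {
    "eu": frozenset({"eu"}),        # EU-resident data is only served within the EU
    "us": frozenset({"us", "eu"}),
}


@dataclass
class RegionalDomain:
    """A domain that owns its data and keeps it stored in one region."""
    region: str
    records: list[dict] = field(default_factory=list)

    def query(self, consumer_region: str) -> list[dict]:
        # The globally defined rule is enforced locally by the owning domain;
        # regions without a rule default to denying all access.
        allowed = RESIDENCY_RULES.get(self.region, frozenset())
        if consumer_region not in allowed:
            raise PermissionError(
                f"data resident in {self.region!r} may not be served to {consumer_region!r}"
            )
        return list(self.records)


eu_orders = RegionalDomain("eu", [{"order_id": 1, "amount": 19.99}])
print(eu_orders.query("eu"))  # [{'order_id': 1, 'amount': 19.99}]
```

Defaulting to deny for regions without a rule is a deliberate choice in this sketch: a missing policy should fail closed rather than leak data across borders.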
