We’re continuing our series on data replication types. In this blog post, we look at batch replication and its use cases.

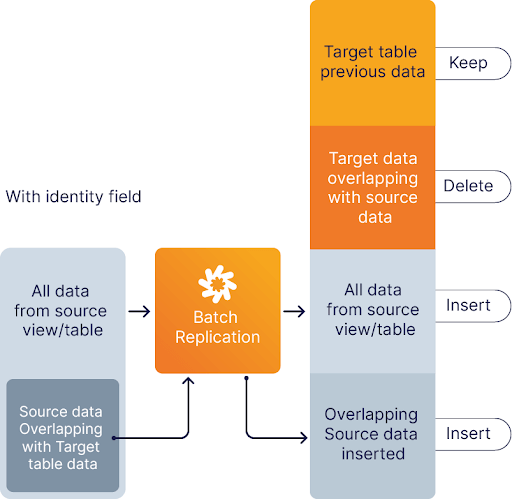

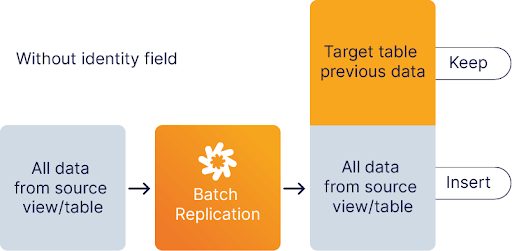

The purpose of batch replication is to append all data from the source view or table to the target table, with the option of preventing duplicate records. Batch replication is easier to implement than incremental replication (but less flexible) and more powerful than complete replication. It also differs from both of them in a number of ways.

The option to delete duplicate records comes in handy if you work with an identity field and don’t want to track changes. For instance, say you have a list of customers with information such as name, age, CustomerID, and date of birth. You want to update all of it except the age column, because age is not important to you. With batch replication you can do exactly that.

If, on the other hand, you are working with financial transactions, you might not want to delete any old information when you copy new information to the database, so that you can track the history of a particular account. This is also something you can do with batch replication.
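To make the two modes concrete, here is a minimal Python sketch of the behavior described above. It is an illustration only, not the Data Virtuality API: the function `batch_replicate`, its `identity_field` parameter, and the sample rows are all hypothetical. With an identity field, target rows that match an incoming row are deleted before the batch is appended. Without one, the batch is simply appended and the old rows stay in place as history.

```python
from typing import Any, Dict, List, Optional

Row = Dict[str, Any]


def batch_replicate(
    source: List[Row],
    target: List[Row],
    identity_field: Optional[str] = None,
) -> List[Row]:
    """Return the target contents after one batch replication run.

    Hypothetical helper for illustration; not a Data Virtuality function.
    """
    if identity_field is None:
        # Append-only mode: keep every existing row so history is preserved.
        return target + source

    # Duplicate-prevention mode: drop target rows whose identity value also
    # appears in the incoming batch, then append the whole batch.
    incoming_ids = {row[identity_field] for row in source}
    kept = [row for row in target if row[identity_field] not in incoming_ids]
    return kept + source


# Customer list keyed by CustomerID (sample data, assumed for this sketch).
customers = [
    {"CustomerID": 1, "name": "Ann"},
    {"CustomerID": 2, "name": "Bob"},
]
customer_batch = [
    {"CustomerID": 2, "name": "Robert"},
    {"CustomerID": 3, "name": "Cy"},
]
deduplicated = batch_replicate(customer_batch, customers, identity_field="CustomerID")

# Financial transactions: no identity field, so old rows are never deleted.
transactions = [
    {"account": "A", "amount": 100},
    {"account": "A", "amount": -40},
]
transaction_batch = [{"account": "A", "amount": 25}]
history = batch_replicate(transaction_batch, transactions)
```

In the customer case the target ends up with one row per CustomerID, and the row for customer 2 carries the values from the new batch. In the transaction case all three rows remain, so the full history of the account is still available.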

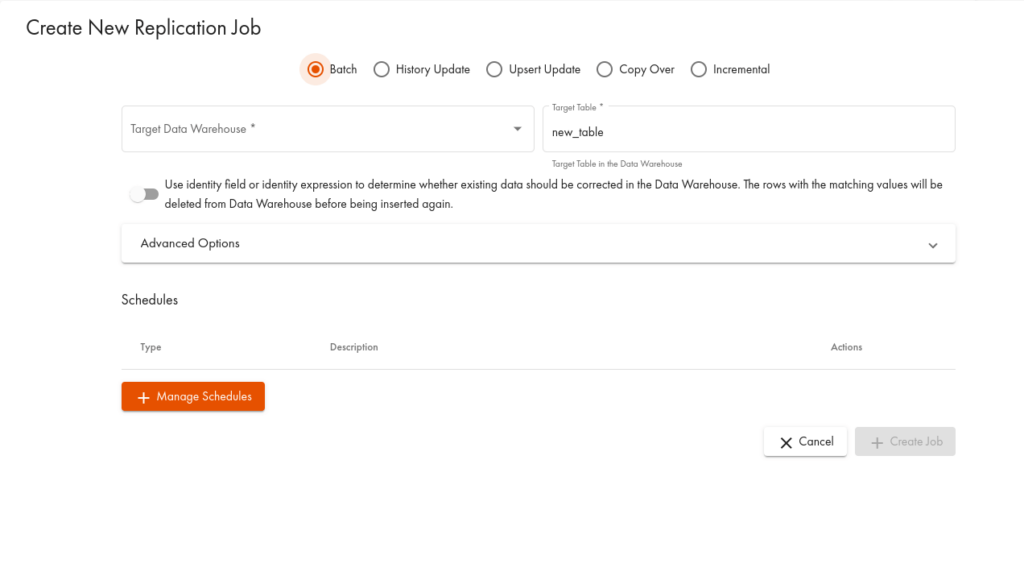

On the Data Virtuality Platform, you can do batch replication in two ways.

Our platform uniquely combines data virtualization and data replication. This gives data teams the flexibility to always choose the right method for their specific requirements. It provides high-performance real-time data integration through smarter query optimization, as well as data historization and master data management through advanced ETL, in a single tool.
