CData Software Acquires Data Virtuality to Modernize Data Virtualization for the Enterprise

Data Virtuality brings enterprise data virtualization capabilities to CData, delivering highly-performant access to live data at any scale.

Explore how you can use the Data Virtuality Platform in different scenarios.

Learn more about the Data Virtuality Platform or to make your work with Data Virtuality even more successful.

Insights on product updates, useful guides and further informative articles.

Find insightful webinars, whitepapers and ebooks in our resource library.

Stronger together. Learn more about our partner programs.

Read, watch and learn what our customers achieved with Data Virtuality.

In our documentation you will find everything to know about our products

Read the answers to frequently asked questions about Data Virtuality.

In this on-demand webinar, we look at how a modern data architecture can help data scientists to be faster and to work more efficiently.

Learn more about us as a company, our strong partner ecosystem and our career opportunities.

How we achieve data security and transparency

Welcome to the last blogpost in our series on data virtualization in the real world. After looking at the benefits of Procedural SQL and SQL stored procedures, in this post we will be focusing on use cases for Stored Procedures in Data Virtuality.

The majority of Stored Procedure topics I’ve touched on are pretty generic and apply to all databases. But now we’re going to focus on creating stored procedures in Data Virtuality.

Let’s take a look at some use cases for stored procedures that we see repeatedly.

Stored procedures are a great way to wrap and abstract out the complexity of calling web services. The stored procedure views.getFishData below is a good example, by promoting code reuse and hiding the complexity of the Rest API.

create procedure views.getFishData(fish_name string)

returns(

"Fishery_u0020_Management" STRING,

"last_update" STRING

) as

begin

SELECT

"xmlTable.Fishery_u0020_Management",

"xmlTable.last_update"

FROM

"generic_ws".invokeHTTP(

endpoint => 'https://www.fishwatch.gov/api/species/' || replace(fish_name, ' ', '-'),

action => 'GET',

requestContentType => 'application/json'

) w,

XMLTABLE(XMLNAMESPACES( 'http://www.w3.org/2001/XMLSchema-instance' as "xsi" ),

'/root/root' PASSING JSONTOXML('root',to_chars(w.result,'UTF-8'))

COLUMNS

"Fishery_u0020_Management" STRING PATH 'Fishery_u0020_Management',

"last_update" STRING PATH 'last_update'

) "xmlTable";

end;;

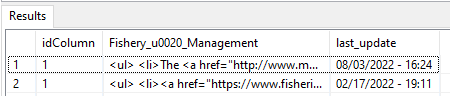

Now that we have the complexity in the stored procedure we can use SQL statements to call the stored procedure with different parameters and combine the results using a union statement.

select * from (call "views.getFishData"("fish_name" => 'Blueline-Tilefish')) as a

union all

select * from (call "views.getFishData"("fish_name" => 'Red-Snapper')) as a;;

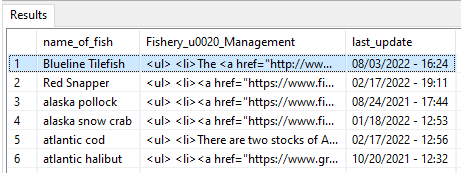

Let’s make that example data driven. First we create a table to hold the fish names.

create table dwh.fish(name_of_fish string);;

insert into dwh.fish(name_of_fish) values('Blueline Tilefish'), ('Red Snapper'), ('alaska pollock'), ('alaska snow crab'), ('atlantic cod'), ('atlantic halibut');;The SQL query below reads the name of the fishes and executes the sproc to retrieve the data. Note the keyword LATERAL, it is like a for-loop allowing for iteration. It’s not an accurate description of LATERAL, but you can effectively think of it as being a loop. Check your favorite SQL dialect for a more thorough explanation. See the reference section at the end of the document.

select

f.*

,x.*

from

dwh.fish f

,LATERAL(call "views.getFishData"(f.name_of_fish)) x;;

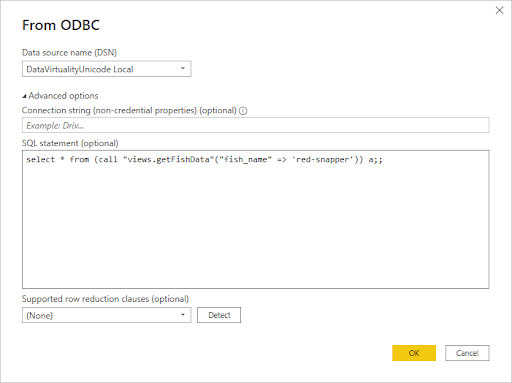

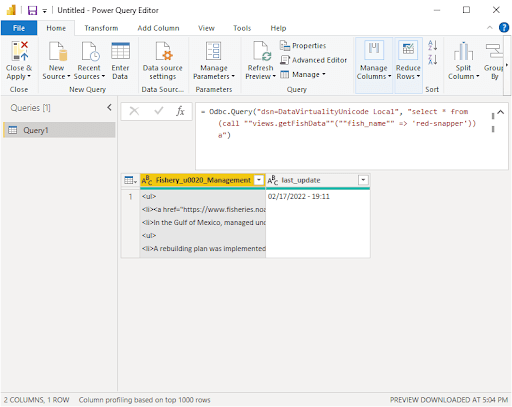

This tip comes from a coworker. Most BI tools like Power BI and Tableau allow you to execute custom SQL queries and these queries can call to stored procedures. For this example I’m going to use Power BI. When defining your connection to Power BI, click on advanced and copy the query into SQL statement box.

Clicking on Transform data will open the following dialog box and create a parameter based query.I’m not going to dive into much detail since the articles I’ve listed in references does a much better job of explaining how.

We’ve had clients transfer terabytes of data between systems. One client was experiencing a timeout because the transfer was taking several hours. The solution was quite simple, use a stored procedure to transfer the data in batches. In the example below, the batch size is 1 million rows and transfers 40 million rows each execution.

create procedure views.BatchDataTransfer()

as

begin

declare integer numruns=40 ;

declare integer i =0;

while (i<numruns)

begin atomic

INSERT into dwh.compass_owi_trans

SELECT * FROM "export_views.compass_owi_trans"

where

owi_transid > (select coalesce(max(owi_transid),-1) from dwh.compass_owi_trans )

order by owi_transid limit 1000000;

i=i+1;

end

end;;

Note the use of the keyword ATOMIC, it instructs Data Virtuality to begin a transaction. The corresponding end commits the data.

Writing this use case was very hard. Business logic is so specific to each company, so we’ll have to use a more generic example. The stored procedure below is an example of reusable code to mask column data with a language specific string.

CREATE procedure views.securityMask(lang string)

returns(masked string) as

begin

select

case

when lang = 'es' then '** restringido **'

when lang = 'de' then '** eingeschränkt **'

else '** restricted **'

end as masked

;

end;;The advantage of using a stored procedure is the code is in a single place and it is easy to modify.

My favorite example of automation is the programmatic onboarding done by a client. This isn’t the actual code, but it will help to illustrate the technique.

This procedure creates the new schema. Then assigns the appropriate permissions. Reads the SQL files from a specified folder. Replaces the placeholder text with the new schema name and executes the SQL code creating the new view or stored procedure.

create procedure views.ClientOnboarding(

new_schema string not null

) as

begin

declare string new_sql;

call "SYSADMIN.createVirtualSchema"("name" => new_schema);

EXEC "SYSADMIN.setPermissions"(

role_name => 'accounting-role',

resourceName => new_schema,

permissions => 'R',

condition => null,

isConstraint => FALSE,

mask => null,

maskOrder => null

) without return;

loop on (select * from (call "sql_local_files.getTextFiles"("pathAndPattern" => '*', "encoding" => 'UTF-8')) as a) as cur

begin

new_sql = replace(cast(cur.file as string), '<<schema>>', new_schema);

execute (new_sql) without return;

end

end;;

Onboarding a new client becomes a simple stored procedure call.

call views.ClientOnboarding('new_client');;The actual code written by our client was much more complex. It created new data sources, views, stored procedures, and set permissions. It also included unit tests verifying all of the components. They told us it saved them many hours versus doing it by hand.

There are too many topics to cover for this article and people have created many great tutorials. So I’m going to direct you to these sources for more learning. Above I mentioned that many databases support creating stored procedures in SQL language.

If you’re interested in learning about stored procedure in Data Virtuality, you can find more information here: https://documentation.datavirtuality.com/3/reference-guide/ddl-support/create-procedure

If you’re interested in learning about stored procedures for your own database server, search for:

Last interesting fact: if you connect the above databases to Data Virtuality (and also some more — the list above is not exhaustive), you can also expose the stored procedures from the connected database servers inside Data Virtuality and call them. This way you can reuse your existing SQL code without the need to reimplement it again in Data Virtuality.

We’ve seen several examples of how stored procedures can be used. I hope this highlights some of the applications of stored procedures and the flexibility it can provide. Below is a list of references you might find useful. If you’d like more information about advanced data virtualization and stored procedures

The Data Virtuality Platform is trusted by businesses around the world to help them harness the power of their data. Book a demo and test all the features of the Data Virtuality Platform in a session tailored to your use case – SaaS or on-premise.

| Topic | URL |

| Lateral Oracle | https://oracle-base.com/articles/12c/lateral-inline-views-cross-apply-and-outer-apply-joins-12cr1 |

| Lateral Postgres | https://www.postgresql.org/docs/current/queries-table-expressions.html |

| Calling stored procedures from Power BI | https://databear.com/sql-stored-procedures-in-power-bi/ |

| Calling SQL with parameters in Tableau | https://www.youtube.com/watch?v=Vu4CEP1QP_A |

Data Virtuality brings enterprise data virtualization capabilities to CData, delivering highly-performant access to live data at any scale.

Discover how integrating data warehouse automation with data virtualization can lead to better managed and optimized data workflows.

Discover how our ChatGPT powered SQL AI Assistant can help Data Virtuality users boost their performance when working with data.

While caching offers certain advantages, it’s not a one-size-fits-all solution. To comprehensively meet business requirements, combining data virtualization with replication is key.

Explore the potential of Data Virtuality’s connector for Databricks, enhancing your data lakehouse experience with flexible integration.

Generative AI is an exciting new technology which is helping to democratise and accelerate data management tasks including data engineering.

Leipzig

Katharinenstrasse 15 | 04109 | Germany

Munich

Trimburgstraße 2 | 81249 | Germany

San Francisco

2261 Market Street #4788 | CA 94114 | USA

Follow Us on Social Media

Our mission is to enable businesses to leverage the full potential of their data by providing a single source of truth platform to connect and manage all data.